After a successful recruitment campaign, our autonomous department has welcomed 25 new members. The team has been working hard to develop skills in various subfields of machine learning and artificial intelligence, one of which is reinforcement learning (RL). These skills will be applied in competitions such as MineRL, F1Tenth and FS AI.

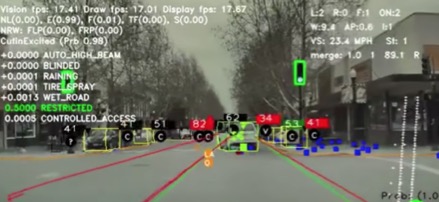

Put simply, reinforcement learning is the science of decision making. For an autonomous vehicle, a primary aim is to avoid collisions, whether with a wall, a pedestrian or another car. Deep neural networks enable the car (the agent) to detect and localise such objects in its surroundings (the environment).

Sensors track nearby objects, and the car learns how to manoeuvre around them using reinforcement learning. Based on the information relayed from the car’s sensors, the neural network predicts the best action for the car to take (e.g. turn right, turn left or do nothing), and the car acts on that prediction.
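To make the idea of "predicting the best action" concrete, here is a minimal sketch, assuming a car with three hypothetical distance sensors (left, front, right) and a single linear layer in place of a deep network. The weights are hand-picked for illustration; in a real system they would be learned during training. The network produces one score per action, and the car takes the action with the highest score.

```python
from typing import List, Sequence

# Discrete actions from the text; the sensor layout and weights below are hypothetical.
ACTIONS = ["turn_left", "turn_right", "do_nothing"]

# One row of weights per action, applied to [left, front, right] obstacle distances (m).
# Hand-picked so the car steers towards whichever side is more open than straight ahead.
WEIGHTS = [
    [1.0, -1.0, 0.0],   # turn_left: left clearance minus front clearance
    [0.0, -1.0, 1.0],   # turn_right: right clearance minus front clearance
    [0.0, 0.0, 0.0],    # do_nothing: constant baseline score of zero
]
BIASES = [0.0, 0.0, 0.0]


def action_scores(sensors: Sequence[float]) -> List[float]:
    """A single linear layer: one score per action from the sensor readings."""
    return [sum(w * x for w, x in zip(row, sensors)) + b
            for row, b in zip(WEIGHTS, BIASES)]


def choose_action(sensors: Sequence[float]) -> str:
    """Pick the action with the highest predicted score."""
    scores = action_scores(sensors)
    return ACTIONS[max(range(len(scores)), key=scores.__getitem__)]


# Obstacle close ahead, more room on the left than on the right.
print(choose_action([5.0, 1.0, 2.0]))  # → turn_left
```

With a clear road ahead (for example `[1.0, 10.0, 1.0]`), both turning scores are negative and the car does nothing.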

The idea of learning through interaction with a surrounding environment applies not only to people but to neural networks too. During training, a reward system is defined, and the agent’s aim is to collect as much reward as possible. Crashing the car earns a negative reward, so the neural network learns to make decisions that lower the probability of crashing again. This trial and error is where the ‘reinforcement’ in RL comes from: the agent may fail at a task many times, but over repeated attempts it gradually learns to succeed. Once a task has been mastered, it is made more difficult; for example, once the car can reliably avoid a wall, obstacles are added.
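The trial-and-error loop described above can be sketched with tabular Q-learning, one of the simplest RL algorithms. This is a toy illustration under stated assumptions, not the team’s setup: a hypothetical straight corridor of six positions with a wall just behind position 0 and a finish line at position 5. Reversing into the wall gives a reward of -1 and ends the episode, reaching the finish gives +1, and every other move gives 0. The learning rate `alpha`, discount `gamma` and exploration rate `epsilon` are illustrative values.

```python
import random
from typing import List, Tuple

# Hypothetical toy track: positions 0..5 in a straight corridor.
# A wall sits just behind position 0; position 5 is the finish line.
N_POSITIONS = 6
FINISH = N_POSITIONS - 1
MOVES = [-1, +1]  # action 0: reverse towards the wall, action 1: drive forward


def step(pos: int, action: int) -> Tuple[int, float, bool]:
    """Apply an action and return (next_pos, reward, episode_done)."""
    nxt = pos + MOVES[action]
    if nxt < 0:
        return pos, -1.0, True   # crashed into the wall: negative reward, episode ends
    if nxt == FINISH:
        return nxt, 1.0, True    # reached the finish line: positive reward
    return nxt, 0.0, False       # ordinary move: no reward


def greedy_action(q_row: List[float], rng: random.Random) -> int:
    """Pick the highest-valued action, breaking ties at random."""
    best = max(q_row)
    return rng.choice([a for a, v in enumerate(q_row) if v == best])


def train(episodes: int = 500, alpha: float = 0.5, gamma: float = 0.9,
          epsilon: float = 0.1, seed: int = 0) -> List[List[float]]:
    """Tabular Q-learning: learn action values by trial and error from rewards alone."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_POSITIONS)]
    for _ in range(episodes):
        pos, done = 0, False
        while not done:
            # Explore occasionally, otherwise exploit what has been learned so far.
            if rng.random() < epsilon:
                action = rng.randrange(len(MOVES))
            else:
                action = greedy_action(q[pos], rng)
            nxt, reward, done = step(pos, action)
            # Terminal transitions have no future value to bootstrap from.
            target = reward if done else reward + gamma * max(q[nxt])
            q[pos][action] += alpha * (target - q[pos][action])
            pos = nxt
    return q


if __name__ == "__main__":
    q = train()
    policy = ["forward" if q[s][1] > q[s][0] else "reverse" for s in range(FINISH)]
    print(policy)
```

Early episodes often end in a crash, which drives the value of reversing at position 0 below zero; as positive reward from the finish line propagates backwards, driving forward becomes the preferred action everywhere. Random tie-breaking matters here: with a fixed tie-break towards reversing, the agent would rarely stumble on the finish line at all.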

The process becomes more complicated for autonomous race cars, because the vehicle must also race as quickly as possible on any track it is put on. The reward therefore has to account for further factors, such as steering angle and speed.
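One way to fold speed and steering into the reward is a weighted sum, sketched below. The state fields, weights and crash penalty are hypothetical choices for illustration, not a description of any competition’s reward; in practice they would need tuning for each car and track.

```python
from dataclasses import dataclass


@dataclass
class RaceState:
    speed: float           # forward speed along the track, m/s
    steering_angle: float  # radians, 0 = straight ahead
    crashed: bool


# Hypothetical weights; real values would be tuned per car and per track.
SPEED_WEIGHT = 0.1
STEERING_WEIGHT = 0.5
CRASH_PENALTY = -100.0


def race_reward(state: RaceState) -> float:
    """Toy shaped reward: go fast, steer smoothly, never crash."""
    if state.crashed:
        return CRASH_PENALTY
    return SPEED_WEIGHT * state.speed - STEERING_WEIGHT * abs(state.steering_angle)


print(race_reward(RaceState(speed=20.0, steering_angle=0.0, crashed=False)))
```

Penalising the absolute steering angle discourages the car from zig-zagging, while the large crash penalty ensures that no amount of speed is worth a collision.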

The new recruits of our autonomous department have been keen to get involved, with five of them already competing in the MineRL competition. The competition involves building an autonomous agent that aims to obtain a diamond in Minecraft using only 4 days of training time. Our team members Eoin Gogarty, Ujjawal Aggarwal, Manus McAuliffe, Evan Mitchell and Kevin Quinn have been sourcing baseline implementations and incrementally improving them with state-of-the-art methods.

One early challenge was understanding the concepts and implementations of reinforcement learning. Most of the team members were new to RL, so the first meetings were spent on presentations covering topics such as deep Q-learning, the Bellman equation and imitation learning.
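For reference, the Bellman optimality equation for the action-value function $Q^*$ states that the value of taking action $a$ in state $s$ equals the immediate reward $r$ plus the discounted value of acting optimally from the next state $s'$, where $\gamma \in [0, 1)$ is the discount factor. Q-learning turns this into an update rule with learning rate $\alpha$, and deep Q-learning approximates $Q$ with a neural network instead of a table:

$$
Q^*(s, a) = \mathbb{E}\left[\, r + \gamma \max_{a'} Q^*(s', a') \,\middle|\, s, a \,\right]
$$

$$
Q(s, a) \leftarrow Q(s, a) + \alpha \left[\, r + \gamma \max_{a'} Q(s', a') - Q(s, a) \,\right]
$$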

Team captain Eoin Gogarty says, “we’ve learnt many valuable skills in practically applying reinforcement learning algorithms during this competition. We plan to re-use this new knowledge in other competitions for Formula Trinity Autonomous, including FS AI and F1Tenth”.

Our team is currently preparing for the final round of MineRL. The submission deadline is February 14th and the results will be announced at the AAAI Conference.