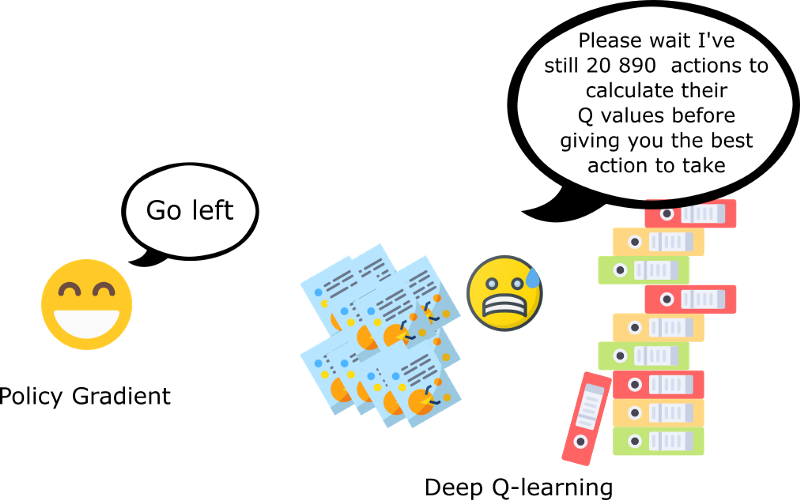

When initially researching reinforcement learning you’ll certainly come across the idea of the Q-learning algorithm. This is a classical reinforcement learning algorithm based on value iteration. By completing an action and then obtaining a reward, the Q-value increases. The aim is to maximise the value function Q by choosing which action could maximise reward.

Policy gradient methods on the other hand are reinforcement learning algorithms based on iteration policy and can solve problems that value-based methods can’t. For instance, in the context of autonomous driving vehicles, it wouldn’t always be suitable to use the Q-learning algorithm. This is due to the fact that there are infinitely many choices of actions eg, every possible steering angle. It wouldn’t be feasible or efficient to output a Q-value for every possible action. Policy-based methods would be more appropriate as the parameters can be adjusted, allowing us to understand and predict the maximum rather than estimating it over and over again.

However, similarly to the Q-learning method the ultimate goal of learning based on strategy iteration is still for the system to obtain the most rewards and minimise obtaining negative rewards. policy gradient attempts to train an agent without explicitly mapping the value for every state-action pair in an environment by taking small steps and updating the policy based on the reward associated with that step. A technique called Monte Carlo policy gradient can be applied in order to derive an optimal policy that maximises the reward for the agent. This involves having the agent run through an entire episode and then update the policy based on the rewards obtained. In the context of autonomous driving the agent would refer to the vehicle.

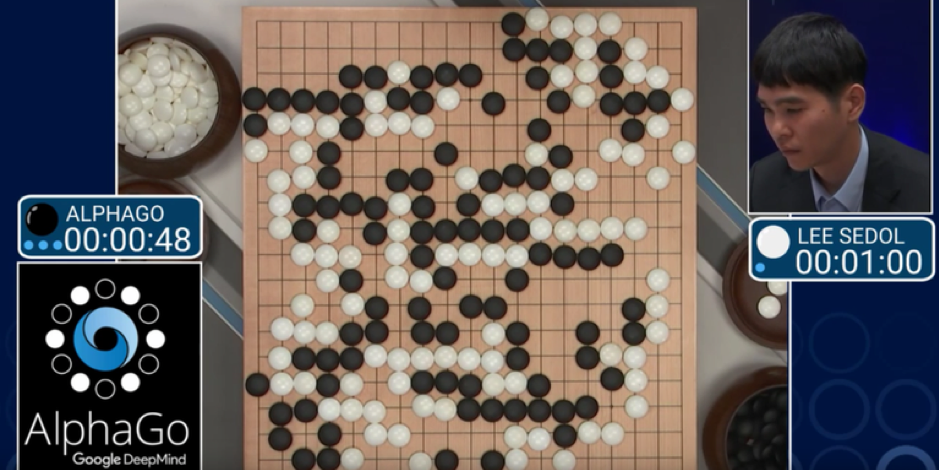

In addition to this, actor-critic methods are also used to optimize the agent policy. This uses two components (a critic for estimating how good an action is from a state, and an actor to optimize the policy based on the critic). One famous example of a project which uses an actor-critic approach is AlphaGo, by DeepMind, which was the first revolutionary AI that beat a top player (Lee Sedol) in the game of Go. It was able to learn from human example games to make the best moves throughout all the games.

OpenAI have also released a different set of policy gradient algorithms in 2017, called Proximal Policy Optimization (PPO). It introduces a few extra steps intended to make the learning process both effective and more stable, while being simpler to implement. Different algorithms such as PPO can be applied to solve many different problems, such as for training a car to race autonomously. In fact, they were able to train a system through self-play to beat professional players at DOTA 2 in a multiplayer team setting, using PPO.

If you would like to play around with some of these algorithms also, do check out Spinning Up by OpenAI (https://spinningup.openai.com/en/latest/index.html), and check out OpenAI Gym (https://gym.openai.com/) to get started! Also, the F1Tenth Gym environment (https://f1tenth-gym.readthedocs.io/en/latest/) is also built on OpenAI Gym, so you can learn to develop your own racing algorithms, while also being able to test out your policy gradient approach to racing!