Have you ever wondered what makes a Tesla drive itself on Autopilot? Or how robots can navigate and explore unknown territory without crashing? How does a computer system learn new information in the way that humans do? In the autonomous research team, we are working towards designing the fastest and safest racing system, and we would like to briefly introduce the main components that go into the development of a fully autonomous racecar. The following sections give an overview of each role in the autonomous team, as explained by our researchers.

State Estimation:

The state of a vehicle refers to its current situation and includes its pose, its kinematics and its dynamics. All calculations in the perception-action cycle rely on accurate estimates of the vehicle’s state. However, these estimates are subject to errors due to noise in sensor readings. In naive state estimation algorithms, this noise accumulates, and the error can grow to the point where the estimated location of the car is off the track even though the real car is not. This is where smart state estimation systems kick in. By combining asynchronous readings from multiple sensors through sensor fusion or redundant estimation, you can keep the state estimates accurate throughout the race and so improve the reliability of the whole car. An understanding of statistical modelling is key to the design of estimation models.
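To make the idea of sensor fusion concrete, here is a minimal sketch of a one-dimensional Kalman-style filter. It is an illustration only, not our team’s estimator: the `Estimate` class, the function names, the sensor labels and all the numbers are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    mean: float      # estimated position along the track, in metres
    variance: float  # uncertainty of that estimate, in metres squared


def predict(est: Estimate, velocity: float, dt: float, process_var: float) -> Estimate:
    """Move the estimate forward in time; uncertainty grows with every prediction."""
    return Estimate(est.mean + velocity * dt, est.variance + process_var)


def update(est: Estimate, measurement: float, measurement_var: float) -> Estimate:
    """Blend in one sensor reading, weighted by how much we trust it."""
    gain = est.variance / (est.variance + measurement_var)  # Kalman gain, between 0 and 1
    mean = est.mean + gain * (measurement - est.mean)
    variance = (1.0 - gain) * est.variance
    return Estimate(mean, variance)


# Hypothetical numbers: start at 0 m and drive at 2 m/s for 0.5 s.
est = Estimate(mean=0.0, variance=1.0)
est = predict(est, velocity=2.0, dt=0.5, process_var=0.5)
est = update(est, measurement=1.2, measurement_var=0.5)  # a fairly precise sensor
est = update(est, measurement=0.9, measurement_var=2.0)  # a noisier second sensor
print(est)
```

Each `update` call shrinks the variance, so two imperfect sensors together give a tighter estimate than either alone, while `predict` on its own lets the uncertainty grow, which is the error build-up described above.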

Path Planning:

Many processes are at work when a driver decides to overtake another vehicle on the road or on the racetrack. The relative position and velocity of the other car must be understood. The surrounding obstacles and the risk of collision need to be noted. Then you need to predict how to control the vehicle in order to overtake the vehicle in front. Although intuitive to drivers, this is one of the most challenging tasks for an autonomous vehicle, which must both cooperate with and compete against other cars that often have unknown strategies and behaviours. Multi-agent interaction is crucial to the success of a race-winning system. Crashes need to be completely avoided, but the strategy needs to be good enough for the racer to beat all its opponents. This tension is what makes path planning interesting. When calculating the optimal raceline and ideal local trajectories, one must design the best method or strategy to overcome the challenges of both time trials and multi-agent races.

Control Engineering:

A fully working autonomous vehicle requires a solution that acts upon its understanding of the perceived environment. The control system is the dedicated part of the vehicle that calculates action outputs, and it relies on accurate signal inputs as well as a reliable physical model of the vehicle. An example of a control system is the proportional-integral-derivative (PID) controller. This type of controller acts to correct the error between the desired state and the state observed from its inputs. The speed at which the error is corrected, as well as the stability of the vehicle’s reactions to control outputs, are very important considerations in a smart control system. Control engineering extends well beyond PID. Optimal control and adaptive control theory are useful for getting the highest performance out of the vehicle even when the model does not capture every aspect of the real vehicle, for example under varying road or race conditions.
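As a rough illustration of the PID controller mentioned above, the sketch below regulates the speed of a toy vehicle. The gains, the `simulate` helper and the plant model (acceleration equals throttle minus a drag term) are assumptions made for this example, not parameters of a real car.

```python
class PID:
    """Textbook PID controller acting on the error between target and measurement."""

    def __init__(self, kp: float, ki: float, kd: float) -> None:
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None  # no derivative term on the very first step

    def step(self, target: float, measurement: float, dt: float) -> float:
        error = target - measurement
        self.integral += error * dt
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(target_speed: float = 5.0, seconds: float = 10.0, dt: float = 0.02) -> float:
    """Toy plant (an assumption): acceleration = throttle - drag * speed."""
    pid = PID(kp=2.0, ki=1.0, kd=0.05)
    speed, drag = 0.0, 0.5
    for _ in range(round(seconds / dt)):
        throttle = pid.step(target_speed, speed, dt)
        speed += (throttle - drag * speed) * dt  # simple Euler integration
    return speed


final_speed = simulate()
print(f"speed after 10 s: {final_speed:.3f} m/s")
```

The proportional term reacts to the current error, the integral term removes the steady offset that drag would otherwise leave, and the derivative term damps the response. Tuning the three gains trades correction speed against stability.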

Perception:

In order to make the most informed decisions, one must understand the nature of one’s surroundings to a good degree. Without meaningful information about the track or external obstacles, the car will fail from the moment it leaves the starting line. It needs a robust perception system in order to plan its trajectory correctly and make its way around the track quickly and accurately, without slipping or crashing. Processing sensor data and learning from it is at the core of building an understanding of the race environment in real time. The best perception systems can provide critical information such as track geometry, the distance from the car to every obstacle, a 3D point cloud, and correct object labels.

Areas such as Simultaneous Localization and Mapping (SLAM), deep learning, and computer vision make up a significant portion of this system. The best perception system uses tools from these areas to turn readings from only a few sensors into meaningful data as quickly as possible, while coping with the many factors that reduce performance (such as poor weather conditions, lighting, and sensor noise).
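As a small example of turning raw sensor data into geometry, the sketch below converts a planar LIDAR scan into 2D points in the car’s frame and finds the distance to the nearest obstacle. The function names, parameter names and the four-beam scan are assumptions for illustration. A real perception stack does far more than this.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, max_range=10.0):
    """Convert polar range readings into (x, y) points, dropping invalid beams."""
    points = []
    for i, r in enumerate(ranges):
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue  # no return, or a reading outside the range we trust
        angle = angle_min + i * angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


def nearest_obstacle(points):
    """Distance in metres from the sensor origin to the closest point."""
    return min(math.hypot(x, y) for x, y in points)


# Hypothetical scan: four beams at -90, -30, 30 and 90 degrees; one beam has no return.
ranges = [2.0, float("inf"), 1.0, 3.0]
points = scan_to_points(ranges, angle_min=-math.pi / 2, angle_increment=math.pi / 3)
print(len(points), "valid points, nearest obstacle at", nearest_obstacle(points), "m")
```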

Simulation:

Before any system is deployed on the real car, many simulations need to be run in order to verify its performance and safety. This is the perfect opportunity to gain deeper insight into how the algorithms work, and how to improve the robustness of the systems further. All this can be done without damaging the vehicle’s sensors in the event of unforeseen bugs or, worse, endangering people. An accurate model of the race needs to be designed and implemented before simulations can be run as intended. Reliable unit tests are crucial to debugging any system that could cause failure in both expected and unexpected race scenarios. Edge cases appear much more frequently when the car is pushed to its limits and optimized to race at full speed against other vehicles. Interdisciplinary work is encouraged so that the simulations, training and experiments meet the needs of the other teams. Once every possible scenario is covered during testing, the full system is ready to be implemented in the vehicle.

Reinforcement Learning:

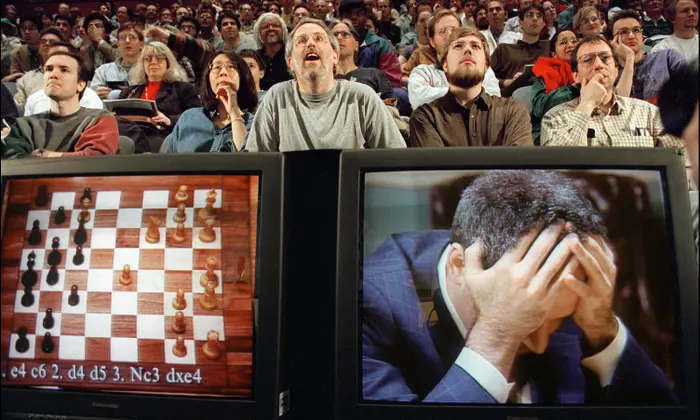

A big inspiration for our research is to create an end-to-end autonomous system that can be the fastest at racing on any track and in any scenario. The dream of AI researchers is to invent a computer that can learn to do tasks better than humans can (pursuing artificial general intelligence, or AGI). Many ground-breaking intelligent agents (like AlphaGo, AlphaZero, and OpenAI Five) have been created to beat world champions in games previously considered impossible for computer systems.

Inspired by the aims of optimal control, reinforcement learning (RL) was developed as a tool for learning to solve tasks by trial and error. In fact, optimal control can be seen as one method within reinforcement learning when the task is modelled as a Markov Decision Process (MDP) that must be solved to perform the task optimally. An optimal policy selects the actions that have the highest expected reward in a given state (as inferred from observation). For very complex problems, such a policy is not trivial to find by purely analytical methods. In modern RL techniques, a system can be designed to increase the probability of actions that lead to positive reward (the desired ones), while decreasing the probability of actions that lead to negative reward. Policy gradient methods (especially in deep reinforcement learning) aim to optimize the RL agent to output the best actions (such as throttle and steering angle) from inputs (such as LIDAR scan ranges and/or images) that are passed through a deep network architecture. After many learning episodes, and some failed trials, the aim is to get the vehicle to race as quickly as possible on any track that it’s put on. This is an interesting yet difficult problem that still needs to be solved for reliable, domain-agnostic performance.
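The core of a policy gradient method can be sketched in a few lines. The example below uses the classic REINFORCE update on a deliberately tiny, one-step problem with three steering actions and made-up rewards. There is no deep network and no simulator here: the action names, rewards and hyperparameters are all assumptions chosen to show how rewarded actions become more probable.

```python
import math
import random

ACTIONS = ["steer_left", "straight", "steer_right"]
REWARDS = [-1.0, 1.0, -1.0]  # hypothetical: only "straight" avoids the wall


def softmax(prefs):
    """Turn action preferences into a probability distribution."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train(episodes=2000, learning_rate=0.1, seed=0):
    rng = random.Random(seed)
    prefs = [0.0] * len(ACTIONS)  # policy parameters, one per action
    for _ in range(episodes):
        probs = softmax(prefs)
        action = rng.choices(range(len(ACTIONS)), weights=probs)[0]
        reward = REWARDS[action]
        for i in range(len(prefs)):
            # Gradient of log pi(action) with respect to preference i.
            grad_log_prob = (1.0 if i == action else 0.0) - probs[i]
            prefs[i] += learning_rate * reward * grad_log_prob
    return softmax(prefs)


probs = train()
for name, p in zip(ACTIONS, probs):
    print(f"{name}: {p:.3f}")
```

After training, almost all of the probability mass sits on the rewarded action. In deep RL the table of preferences is replaced by a neural network that maps observations to action probabilities, but the update follows the same principle.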

Software Infrastructure:

A fully autonomous vehicle relies on reliable software infrastructure to run algorithms in different containers and nodes. Work needs to be done both online (iteratively during the race) and offline (for fully defined optimization problems). Combining both kinds of solution successfully requires planning an ideal framework before the race happens. The resources available for solving algorithmic problems and for training, and the cost of running simulations, are also important considerations when designing the full software infrastructure. With software libraries and frameworks constantly releasing new versions and features, and compatibility issues arising between different racing system packages, decisive action needs to be taken to maintain efficiency and robustness in workflow and algorithm execution. Whether work is done in simulation software, on multiple machines, locally, or on cloud computing platforms, the software infrastructure needs to account for it. Fully reliable and maintainable DevOps is key to the team’s success in time for race day.

A successful and innovative team requires every single role to work together and to appreciate the wider interdisciplinary effort that goes into making a fully working self-driving car. Whether you’re learning new topics from F1Tenth, published works, maths notes, or online lectures, you are sure to come across fun, novel ideas that might be just enough to give us a significant edge over other teams, and that will eventually raise new questions for the work in autonomous racing still to come.

If any of this kind of work interests you, make sure that you apply to join our team as a researcher before our recruitment deadlines (13 November, 23:59 for this round of applications)! Our mission is to change the way we learn in Trinity, and we hope to get you involved in our own autonomous racing projects soon!